Example of ERP/CRM order processing and social data landing on Snowflake on Azure, with integrated dashboards to make use of it. In this blog, we are going to explore the SAP-specific scenario: SAP data to the Snowflake cloud data warehouse on Azure.

High-Level Architecture scenarios on Azure

To learn about ADF's overall support for SAP data integration scenarios, see the SAP data integration using Azure Data Factory whitepaper. It gives a detailed introduction to each SAP connector, compares them, and provides guidance.
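In ADF terms, the SAP-to-Snowflake flow in the architecture above comes down to a pipeline with a Copy activity. That activity reads from an SAP connector and writes to the Snowflake connector. The Python sketch below only builds such a pipeline definition as a dictionary and prints it as JSON; it does not call Azure.

A few things are assumptions rather than confirmed details:

- The dataset and linked-service names (`SapOrdersTable`, `SnowflakeOrders`, `StagingBlob`) and the staging path are hypothetical.
- The property names (`SapTableSource`, `SnowflakeSink`, `SnowflakeImportCopyCommand`, `enableStaging`, `stagingSettings`) reflect my understanding of the ADF copy-activity JSON. Please check them against the current connector documentation, because newer connector versions may use different type names.

```python
import json

# Hypothetical names: replace them with your own ADF datasets and linked services.
SAP_DATASET = "SapOrdersTable"          # dataset on an SAP Table linked service (assumed)
SNOWFLAKE_DATASET = "SnowflakeOrders"   # dataset on a Snowflake linked service (assumed)
STAGING_LINKED_SERVICE = "StagingBlob"  # Azure Blob Storage linked service used for staging (assumed)


def build_copy_pipeline(name: str) -> dict:
    """Return a pipeline definition with one Copy activity: SAP table -> Snowflake."""
    copy_activity = {
        "name": "CopySapToSnowflake",
        "type": "Copy",
        "inputs": [{"referenceName": SAP_DATASET, "type": "DatasetReference"}],
        "outputs": [{"referenceName": SNOWFLAKE_DATASET, "type": "DatasetReference"}],
        "typeProperties": {
            "source": {"type": "SapTableSource"},
            "sink": {
                "type": "SnowflakeSink",
                # The Snowflake sink loads data with Snowflake's COPY INTO command.
                "importSettings": {"type": "SnowflakeImportCopyCommand"},
            },
            # SAP table rows are not files that COPY INTO can read directly,
            # so ADF first writes them to interim Blob storage (staged copy).
            "enableStaging": True,
            "stagingSettings": {
                "linkedServiceName": {
                    "referenceName": STAGING_LINKED_SERVICE,
                    "type": "LinkedServiceReference",
                },
                "path": "staging/sap-to-snowflake",  # hypothetical container/folder
            },
        },
    }
    return {"name": name, "properties": {"activities": [copy_activity]}}


if __name__ == "__main__":
    print(json.dumps(build_copy_pipeline("SapToSnowflakeBatch"), indent=2))
```

Staged copy is switched on because, as I understand the connector, a direct copy into Snowflake only works when the source is already a supported file format in Azure storage. Data read from an SAP table has to pass through interim storage first.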

For newcomers to Snowflake: it is a cloud-based data warehouse solution offered on all the big hyperscalers, such as Microsoft Azure, AWS, and GCP. When you open a new trial account, Snowflake gives you a 30-day free trial with $400 of credit to explore and test your data. That is a huge advantage for anyone exploring it for the first time, just like me! Once you've configured your account and created some tables, you will most likely need to get data into your data warehouse. Sometimes you also have to export data from Snowflake to another destination, for example to provide data to third parties.

We know that without data, you have nothing to load into your system and nothing to analyze. A data warehouse delivers the most value when your data pipelines provide the fuel that powers your analytics efforts. To get insights, you extract data from various sources and load it into a data warehouse or data lake. These ETL/ELT pipelines can sometimes be fairly complex, so enterprises prefer to connect and build them with modern tools such as Azure Data Factory, Talend, Stitch, Qlik, and many more. Depending on your architecture and data requirements, you might choose one or several ETL/ELT tools for your use case.

I'm going to use my favorite Azure service, Azure Data Factory (ADF), which is Microsoft's fully managed, serverless data integration tool. It lets developers build ETL/ELT data processes, called pipelines, with drag-and-drop functionality and numerous pre-built activities. ADF provides 90+ built-in connectors that let you integrate easily with many data stores, on-premises or in the cloud, regardless of data variety or volume. There are dozens of native connectors for your sources and destinations:

- on-premises file systems and databases such as Oracle, DB2, and SQL Server
- applications such as Dynamics 365 and Salesforce
- cloud solutions such as AWS S3, Azure Data Lake Storage, and Azure Synapse

The Snowflake connector is one of the latest additions and is available as well. The aim is to load our SAP data into Snowflake, either in batches or in near real time, using Azure Data Factory's plug-and-play approach.
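The batch or near-real-time loading mentioned above is often implemented with a high-watermark (delta) pattern. Each run does three things:

1. Extract only the rows changed since the last recorded watermark.
2. Load those rows.
3. Advance the watermark.

ADF's incremental-copy tutorials follow the same idea using pipeline activities. The sketch below is plain Python, not ADF code, and is only meant to show the logic. `OrderRow`, `changed_at`, `extract_delta`, `load`, and `run_batch` are hypothetical names. The sketch also assumes each source row carries a reliable last-changed timestamp, which not every SAP table provides.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class OrderRow:
    """One source row; changed_at is an assumed last-change timestamp."""
    order_id: str
    changed_at: datetime
    net_value: float


def extract_delta(source: list[OrderRow], watermark: datetime) -> list[OrderRow]:
    """Extract only rows changed after the previous watermark."""
    return [row for row in source if row.changed_at > watermark]


def load(target: dict[str, OrderRow], rows: list[OrderRow]) -> None:
    """Upsert rows keyed by order_id (a stand-in for a MERGE in the warehouse)."""
    for row in rows:
        target[row.order_id] = row


def run_batch(source: list[OrderRow], target: dict[str, OrderRow],
              watermark: datetime) -> tuple[datetime, int]:
    """Run one incremental batch and return (new_watermark, rows_loaded)."""
    delta = extract_delta(source, watermark)
    load(target, delta)
    # Advance to the newest change seen; keep the old watermark if nothing changed.
    new_watermark = max((row.changed_at for row in delta), default=watermark)
    return new_watermark, len(delta)


if __name__ == "__main__":
    source = [
        OrderRow("0000000001", datetime(2020, 12, 1, 9, 0), 120.0),
        OrderRow("0000000002", datetime(2020, 12, 1, 10, 30), 80.0),
    ]
    target: dict[str, OrderRow] = {}
    watermark, loaded = run_batch(source, target, datetime(2020, 1, 1))
    print(watermark, loaded)  # -> 2020-12-01 10:30:00 2
    # A later change to order 1 is picked up by the next batch only.
    source.append(OrderRow("0000000001", datetime(2020, 12, 2, 8, 0), 150.0))
    watermark, loaded = run_batch(source, target, watermark)
    print(watermark, loaded, target["0000000001"].net_value)  # -> 2020-12-02 08:00:00 1 150.0
```

Because the load step is an idempotent upsert, running a batch twice does no harm. One caveat: the strict `>` comparison skips rows that share the watermark's exact timestamp but arrive after a batch has run. Real pipelines often re-read a small overlap window and let the upsert absorb the duplicates.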

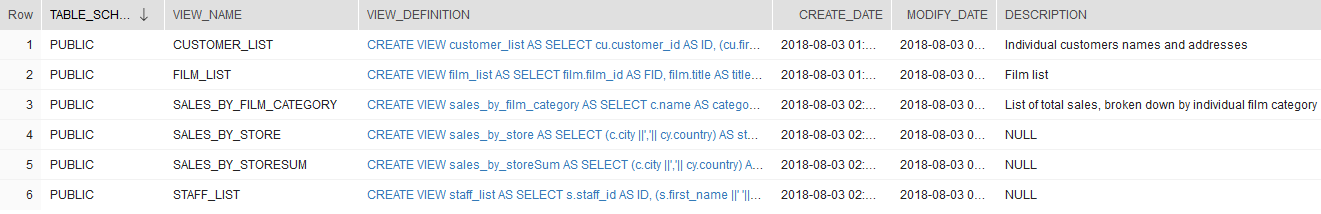

Data engineers and data scientists love to use SQL to solve all kinds of data problems, and it gives them a firm grip on shaping and manipulating data views.
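To illustrate the kind of view-building SQL meant here, the small sketch below uses Python's built-in `sqlite3` module as a local stand-in for Snowflake. Some details are illustrative only:

- The table's columns are named after a few fields of the SAP sales-document header table VBAK.
- The rows are made-up sample data.
- `customer_order_totals` is a hypothetical view.

Snowflake's SQL dialect differs from SQLite's in places, but a plain `CREATE VIEW ... GROUP BY` like this one is standard SQL.

```python
import sqlite3

# In-memory SQLite stands in for Snowflake; the SQL is deliberately plain.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Illustrative landing table with a few VBAK-style sales-header columns.
    CREATE TABLE vbak (
        vbeln TEXT PRIMARY KEY,   -- sales document number
        kunnr TEXT,               -- sold-to customer
        erdat TEXT,               -- creation date (ISO string here)
        netwr REAL                -- net order value
    );
    INSERT INTO vbak VALUES
        ('0000000001', 'C100', '2020-12-01', 120.0),
        ('0000000002', 'C100', '2020-12-02',  80.0),
        ('0000000003', 'C200', '2020-12-02', 200.0);

    -- A view gives analysts a reusable, query-friendly shape of the raw data.
    CREATE VIEW customer_order_totals AS
    SELECT kunnr AS customer, COUNT(*) AS orders, SUM(netwr) AS total_net_value
    FROM vbak
    GROUP BY kunnr;
    """
)

for row in conn.execute("SELECT * FROM customer_order_totals ORDER BY customer"):
    print(row)  # -> ('C100', 2, 200.0) then ('C200', 1, 200.0)
```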

“Data isn’t the new oil - it’s the new nuclear power.” – Unknown

As we near the end of this year of strange pandemic times, I am sitting down to write my last blog of 2020. It will guide you to the resources needed to work with your SAP data using Azure services and to build a robust data analytics platform on Snowflake.

According to Gartner (my favorite one!), the public cloud services market continues to grow, largely due to the data demands of modern applications and workloads. Data is one of the leading factors in this transition. In recent years, organizations have struggled with processing big data: data sets large enough to overwhelm commercially available computing systems.

As we all know, Santa Claus runs on SAP, and his North Pole supply chain data grows every year. The Elves' IT team started exploring Industry 4.0, machine learning, and increasingly popular serverless services, combined with a data lake. These services include high-performance, fully automated, zero-administration data warehouses such as Snowflake and Azure Synapse. The goal was to let the team focus on growing their Line of Business (LoB) on SAP S/4HANA without any trouble.

The agenda for this blog is to demonstrate SAP data replication to the Snowflake platform to build an independent data warehousing scenario. Sounds very interesting… Let's keep scrolling to see what's next!